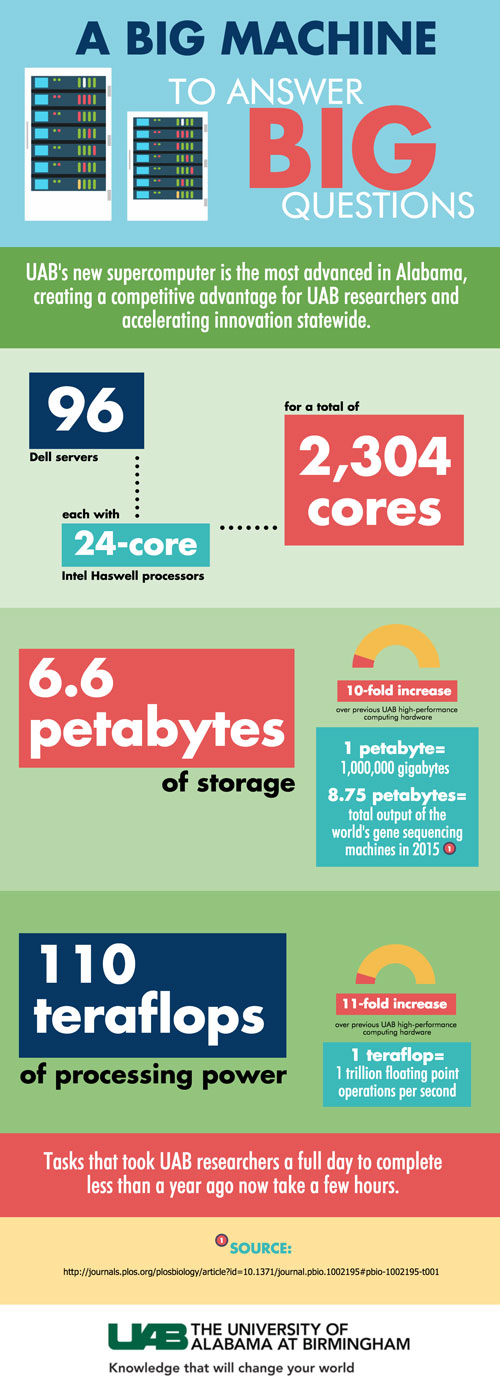

With its new high-performance computing cluster, the fastest supercomputer in Alabama, the University of Alabama at Birmingham can now execute tasks in a couple of hours that took an entire day with the equipment it had in 2015.

UAB officials were joined at a ribbon-cutting ceremony Wednesday by Dell EMC North America President of Commercial Sales Bill Rodrigues, as well as faculty, staff and students who will benefit from the supercomputer, to celebrate the significant leap forward in UAB’s research computing capabilities.

Vice President for Information Technology Curt Carver, who joined UAB in 2015, has led aggressive investments in the institution’s IT infrastructure, which have increased its computing speed from 10 teraflops to 110 teraflops within the last year.

The supercomputer also takes UAB from 0.7 petabytes of research computing storage/memory to nearly 7 petabytes. Carver points to personalized medicine — using a patient’s own genetic makeup to allow more specific treatment of disease — to explain the significance of the increase. Personalized medicine was established as an institutional priority for UAB, considered to be the future of patient care and of eradicating disease; but sequencing a single person’s genome uses enough memory to nearly max out the institution’s previous capacity.

Nita Limdi, Pharm.D., Ph.D., MSPH, professor in the UAB Department of Neurology and the interim director of the Personalized Medicine Institute, says the supercomputer and continued advancements in IT are critical.

“Technology is vital to expanding our capabilities in personalized medicine, and our leadership has demonstrated a serious commitment to the future of medicine with these investments,” Limdi said.

UAB President Ray L. Watts established investments in the IT infrastructure as a key to supporting the institution’s strategic planning process.

“Dr. Carver and his team have exceeded our very high expectations in leading an aggressive expansion of our IT infrastructure for research, and what we have seen to date is just the beginning of his vision for UAB,” Watts said. “Our new capabilities will continue to attract and support top faculty, staff and students, make us more competitive to secure research funding, allow us to better care for our patients, and accelerate our world-changing discoveries.”

UAB has more than $500 million in annual research and development expenditures and secures more research funding every year than all other universities in Alabama combined, bringing high-impact innovations, as well as jobs and a tremendous economic benefit to Birmingham, Alabama and beyond. UAB is Alabama’s largest single employer and has an annual economic impact exceeding $5 billion in the state.

“Our students, faculty and staff are doing great things,” Watts said. “And our commitment to continued investment in our IT infrastructure will bring more and more opportunities to them that are available only at UAB.”

Carver’s team worked with Dell to secure the supercomputer, which was supported by a 2015 Advance Alabama grant and by funding from Watts and UAB’s Office of Research and Economic Development, as well as from many on-campus entities whose students and faculty will benefit from it, including the College of Arts and Sciences and the schools of Engineering and Public Health.

“Dell EMC is proud to power higher-education institutions like the University of Alabama at Birmingham that use technology to address critical issues like improving health care,” said Bill Rodrigues, president of North America Commercial Sales, Dell EMC. “With their new Dell EMC HPC cluster, UAB researchers will have the compute and storage they need to aggressively research, uncover and apply knowledge that changes the lives of individuals and communities in many areas, including genomics and personalized medicine.”

The supercomputer, a High Performance Computing Cluster (HPCC) rated at 110 teraflops and powered by Dell EMC, consists of a mix of Dell EMC PowerEdge 13th Generation servers, all with Intel 24-core Haswell processors: 36 nodes with 128 GB of RAM, 38 nodes with 256 GB, 14 nodes with 384 GB, four nodes with NVIDIA K80 GPU cards, and four nodes with Intel Xeon Phi cards. The HPCC is connected with Mellanox InfiniBand FDR 56 Gb/s high-speed switches for parallel computations and Dell Networking 40 Gb Ethernet for storage access, with 10 Gb Ethernet to each node. The storage platform uses Data Direct Networks, providing 6.6 PB raw (5.2 PB usable) capacity via InfiniBand and 40 Gb Ethernet. Bright Computing Advanced Cluster Management software provides complete management of the cluster.
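For readers who want the totals behind those figures, the short Python sketch below adds up the memory tiers and compares usable to raw storage. It relies only on the numbers quoted above. The variable names are illustrative, and because the article does not say whether the GPU and Xeon Phi nodes are counted among the memory tiers, they are left out here.

```python
# Memory tiers as listed in the article: (number of nodes, GB of RAM per node).
# Assumption: the four GPU nodes and four Xeon Phi nodes are not counted here,
# since the article does not say which memory tier they belong to.
ram_tiers = [(36, 128), (38, 256), (14, 384)]

total_nodes = sum(count for count, _ in ram_tiers)
total_ram_gb = sum(count * gb for count, gb in ram_tiers)

# Storage capacity quoted for the storage platform, in petabytes.
raw_pb, usable_pb = 6.6, 5.2
usable_fraction = usable_pb / raw_pb

print(f"{total_nodes} nodes with {total_ram_gb} GB of RAM in total")
print(f"Usable storage is {usable_fraction:.0%} of raw capacity")
```

Under those assumptions, the three tiers come to 88 nodes and 19,712 GB of RAM, and roughly 79 percent of the raw storage is usable.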

After major investments in information technology infrastructure — bringing the fastest supercomputer in Alabama to campus — UAB can now execute tasks in a couple of hours that took an entire day just a year ago.
